Preamble

In the first part, we journeyed through millennia of knowledge sharing, from oral tradition to printing, from the Internet to infobesity, to understand how humanity has built, lost, and rebuilt its knowledge. We saw how each revolution expanded access to knowledge while creating new risks. Now we stand at the threshold of the latest revolution: Artificial Intelligence. Its promises are staggering. But so are its shadows.

1. The promise of AI: the universal synthesizer

The end of barriers

Where traditional search engines give us a list of links and leave us the work of synthesis, AI offers us a structured, contextual answer tailored to our question. It doesn't just point to books: it reads us the summary.

This synthesizing capability abolishes several historical barriers to knowledge.

The language barrier, first. AI can translate, summarize, and explain in any language. A French researcher can query Chinese publications without mastering Mandarin. A Brazilian student can access German-language scientific literature. The Tower of Babel of knowledge is crumbling.

The complexity barrier, next. AI can popularize, adapt its level of explanation to the interlocutor. It can explain quantum mechanics to a ten-year-old or a doctoral student, adjusting its vocabulary, analogies, and depth accordingly.

The time barrier, finally. What once required hours of documentary research can be obtained in seconds. AI compresses access time to knowledge spectacularly.

The universal guardian

One of the most exciting promises of AI is that of the personalized tutor. Historically, the best education was that of the individual preceptorship, i.e. a teacher for a student, capable of adapting his teaching to the strengths, weaknesses, rhythms and interests of his single disciple. But this privilege was reserved for princes and aristocrats.

AI offers the possibility of democratizing this pedagogical excellence. She can identify a learner's shortcomings, adjust their explanations, propose targeted exercises, answer questions with infinite patience, at any time of the day or night.

For a dyslexic child, a student whose mother tongue is not that of teaching, an adult in professional retraining, and for all those who are struggling to support the traditional education system, AI could represent an unprecedented opportunity.

Accelerating scientific discovery

Modern research illustrates a truth that we find difficult to accept: no human brain can master the totality of a complex subject on its own.

CERN's Large Hadron Collider (LHC) involves more than 10,000 scientists of more than 100 different nationalities. The discovery of the Higgs boson is not the work of a solitary Einstein, but of an unprecedented global collaboration. The sequencing of the human genome, climate change research, the development of RNA vaccines, and many others are all the result of distributed collective intelligence.

AI can amplify this collective intelligence. By cross-referencing data from millions of publications, it can help researchers connect points invisible to the human mind, such as comparing an observation in marine biology with a discovery in materials chemistry, suggesting a hypothesis that no specialist, confined to his field, would have formulated.

Recent breakthroughs such as AlphaFold's prediction of protein structure show that this promise is not just theoretical. AI can accelerate scientific discovery in ways we're just beginning to measure.

The promise is immense. But it's time to play devil's advocate.

2. The machine's shadows: the librarian under influence

If access is facilitated, reliability is threatened. Entrusting our knowledge to systems we don't fully understand carries risks we must face squarely.

The great illusion: AI doesn't understand

It is crucial to understand what generative AI actually does and especially what it does not do.

AI doesn't "understand" the world in the way we mean it. It predicts the next word. Trained on billions of texts, it has learned which words tend to follow which other words, in which contexts. It produces statistically plausible sequences which, most of the time, give impressive, but sometimes catastrophically wrong results.

An analogy may help grasp this distinction. Imagine an extraordinarily gifted parrot that had memorized all the dialogue from every movie about fires. It could shout "Fire!" perfectly convincingly. But it doesn't know what a fire is. It wouldn't flee from flames. It couldn't improvise an appropriate response to a novel situation

AI, similarly, can produce a text that looks like a scientific explanation with the appropriate vocabulary, structure, and tone without there being any "understanding" behind it. It does not distinguish a truth from a falsehood: it distinguishes a probable sequence from an improbable sequence.

Hallucination as the norm

This is why AI can assert falsehood with the same assurance as an established truth. The researchers call this phenomenon "hallucination," a term that emphasizes the gap between the apparent confidence of the response and its actual reliability.

AI can invent citations, attribute words to authors who have never said them, create bibliographical references from scratch that do not exist. It may mix real facts in such a way as to produce a false conclusion. It can overgeneralize, confuse correlation and causality, ignore essential nuances.

And it will do so with the same aplomb, the same fluidity, the same apparent authority as when it reproduces perfectly accurate information. For the untrained user, nothing distinguishes reliable response from manufacturing.

Flooding and the "Model Collapse"

With the cost of producing content having fallen to almost zero, we are witnessing a massive generation of texts, images, videos without expertise or verification. Content farms produce thousands of "SEO-friendly" articles without any competent human having proofread them. Entire sites are automatically generated, filled with plausible but hollow or erroneous texts.

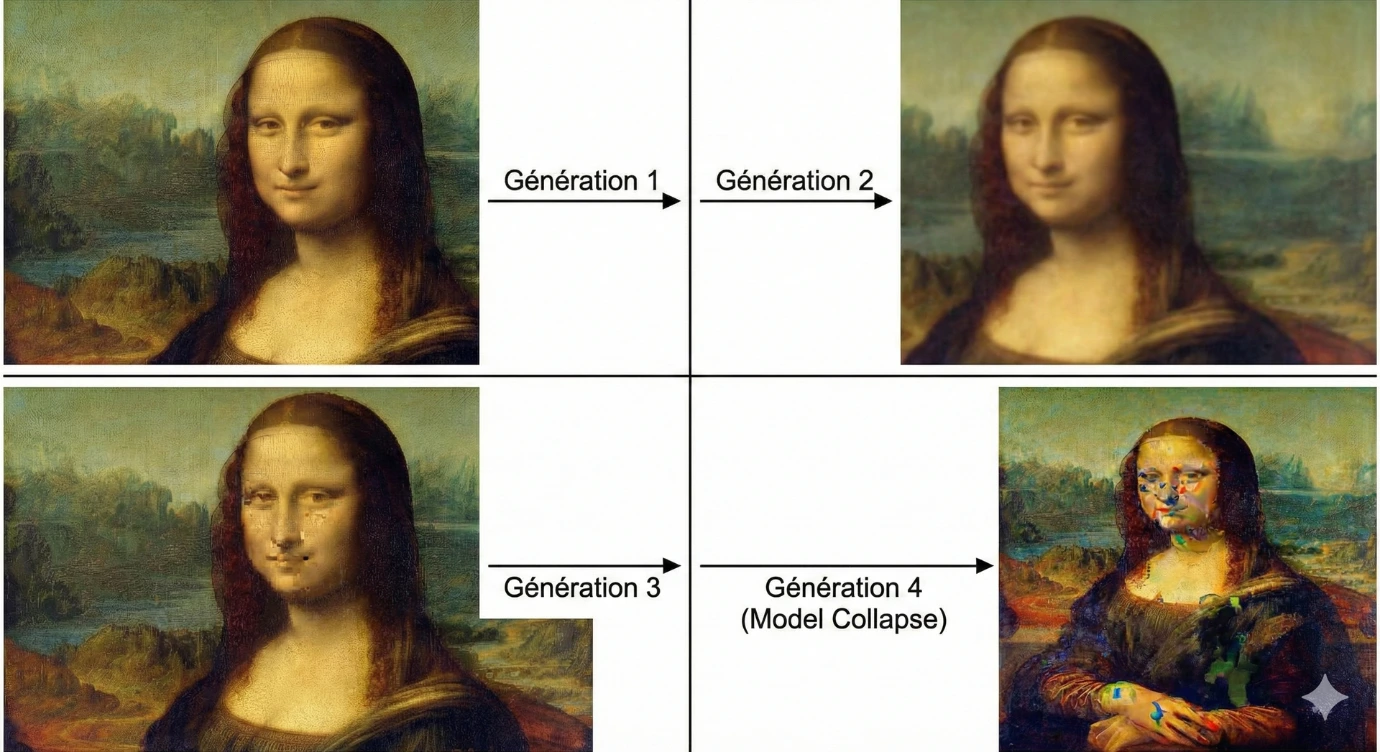

The ultimate danger is what researchers call the "Model Collapse". The phenomenon is easy to understand by analogy: imagine a photocopy of a photocopy of a photocopy. With each generation, the quality deteriorates, the details are lost, the noise accumulates.

If future AIs train on content generated by current AIs, which is increasingly likely, given the increasing proportion of synthetic content on the web, we risk a gradual degeneration of knowledge. The errors, biases, and approximations of generation N will be amplified in the N+1 generation, and then again in the N+2 generation. The circle closes, and the link with reality is stretched until it is broken.

The loss of the source: knowledge without origin

There is a risk that is less often mentioned, but which deserves attention: the loss of traceability.

When you consult Wikipedia, each statement is (in principle) accompanied by a reference. You can go back to the source, check for yourself, evaluate the reliability of the origin. When you use a traditional search engine, you access the original sites, so you can see who is speaking, in what context, with what potential interests.

The first generations of generative AI posed a major problem: they synthesized thousands of sources to render a unified, smooth, seamless response but without ever revealing where the information came from. The user could no longer assess reliability in terms of origin, nor could he distinguish scientific consensus from marginal opinion or even total hallucination.

Aware of this limitation, industry players have begun to integrate source citation features. Some AI engines now provide clickable references, allowing the user to verify the information at the source. This is a significant step forward, which partly responds to this concern..

However, this evolution highlights a new requirement: in a professional or scientific context, it is becoming imperative to favor AI tools that cite their sources and above all, to take the time to verify these sources. Because even when a reference is provided, there is no guarantee that the AI has correctly interpreted or faithfully summarized it. The quote reassures, but does not dispense with critical thinking.

Propaganda 2.0: industrializing lies

Beyond technical errors, there is malicious intent.

Propaganda has always existed. But its production was expensive: authors, printers, distributors were needed. AI breaks this lock. It makes it possible to produce disinformation on an industrial scale, personalized, targeted, in any language, on any subject, at almost zero marginal cost.

Worse: in a world where AI bases its "knowledge" on web statistics with the rule "if a lot of texts say the same thing, it's probably true", then it is enough to flood the web with false information to rewrite the reality perceived by the algorithm. Manipulation is no longer aimed only at humans: it is aimed at the machines that inform humans.

State actors, interest groups, extremists of all stripes now have a weapon of mass manipulation of unprecedented power.

Commercial bias: the truth of the highest bidder

While generative AI is replacing search engines as the single point of entry to information, a new threat is emerging: commercial capture.

Search engines already have this problem. Behind "organic" results are sponsored results, and search engine optimization (SEO) has become an industry that skews the hierarchy of information to the benefit of those who have the means to manipulate it.

With AI, the risk is getting worse. How can we ensure that AI does not bias its responses to satisfy commercial solicitations? Who controls what the AI "recommends"? Will the answers be the most accurate, or the most profitable for the service operator?

We risk seeing the birth of an "optimization of AI responses" where the "truth" displayed will be that of the highest bidder. The universal librarian could become a salesman in disguise.

3. Who controls the librarian?

The question of power

There is one angle that is often dismissed in discussions on AI: that of the concentration of power.

Developing cutting-edge generative AI requires colossal resources: billions of dollars of investment, titanic computing infrastructure, access to massive volumes of data, and teams of some of the world's most brilliant researchers. The most successful models, those that push the boundaries of what is possible, are mainly developed by a handful of technology companies, mostly American.

However, it would be inaccurate to speak of an absolute monopoly. A dynamic ecosystem of open source models has developed in parallel. Organizations such as Meta (with LLaMA), Mistral AI in France, DeepSeek in China, or community initiatives offer models whose code and sometimes weights are freely accessible. These alternatives, although generally less powerful than the state-of-the-art proprietary models, allow researchers, companies and individuals to experiment, adapt and deploy their own solutions without being entirely dependent on the giants of the sector.

This tension between concentration and openness is reminiscent of other moments in technological history, the web for example has itself oscillated between open protocols and closed platforms. The outcome is not written in advance.

The fact remains that the most advanced models, those that define the state of the art and capture most of the attention of the general public, remain in the hands of a small number of players. And even open source models require significant resources to train and deploy at scale, which de facto limits their accessibility to organizations with a certain amount of computing power.

We are therefore witnessing less an absolute oligarchy than a two-tier structure: on the one hand, a concentrated technological edge; on the other, an open ecosystem that democratizes access to lesser but real capabilities. The balance between these two poles will largely shape the future of the relationship between humanity and its knowledge.

The black box: opacity as the norm

These systems are, moreover, opaque. The most advanced language models are "black boxes" whose inner workings even their creators do not fully understand. What exact data were they trained on? What biases have they absorbed? What implicit rules govern their answers? These questions remain largely unanswered.

This opacity poses a fundamental democratic problem. We delegate crucial decisions, what information is reliable, what knowledge is worth passing, what perspective is legitimate, to systems that we cannot audit, challenge, or truly understand.

Cognitive dependence: atrophying the mind

A final risk, more diffuse but perhaps deeper: cognitive dependence.e.

Every tool we adopt transforms our relationship with the world and with ourselves. Writing, according to Plato, risked atrophying memory. The calculator has changed our relationship with mental arithmetic. GPS has changed the way we orient ourselves in space.

What happens when we delegate to AI not only access to information, but also its synthesis, analysis, and critical evaluation? What faculties are we in danger of allowing to atrophy for lack of exercise?

The ability to search, to sort, to doubt, to check, to think for oneself, these skills are like muscles. If we stop using them, they weaken. AI as an "intellectual crutch" could, in the long run, make us unable to walk alone.

Conclusion: the new challenge of critical thinking

From the word of the storyteller to the code of the algorithm, humanity has never ceased to seek to overcome oblivion and ignorance. Each revolution, writing, printing, the Internet, has widened the circle of those who could access knowledge, while creating new risks, new forms of manipulation, new imbalances of power.

Artificial Intelligence is part of this long history. It is a powerful tool that can help us manage the increasing complexity of our world, to overcome language and disciplinary barriers, to accelerate scientific discovery, to democratize access to quality education.

But it requires of us a skill that we may have neglected in the age of Google: critical thinking.

Faced with the machine that has the answer to everything, our challenge is no longer to access information but to verify that it is true. Our task is no longer to find knowledge but to distinguish it from its counterfeiting. Our responsibility is no longer just to inform ourselves, but to resist the temptation to delegate our judgment.

AI will not exempt us from thinking. On the contrary, it makes this requirement more imperative than ever.s.

Because in the end, knowledge has value only if it is true. And the truth cannot be delegated.